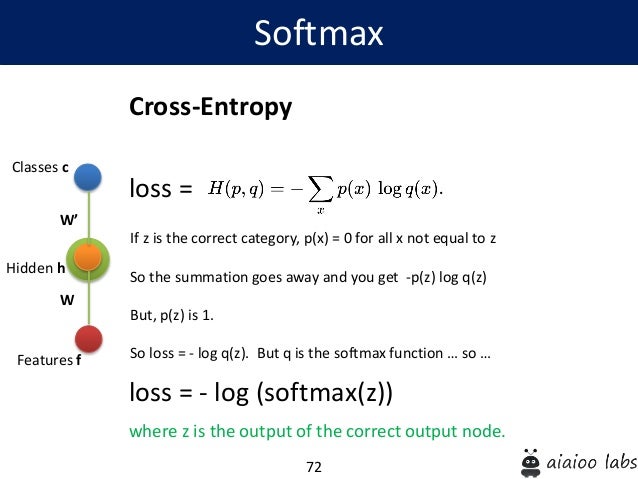

This criterion computes the cross-entropy loss between input logits and target. Its parameters are input (Tensor), the predicted unnormalized logits, and target (Tensor), the ground-truth class indices or class probabilities; see the Shape section of the documentation for supported shapes. See the PyTorch documentation on CrossEntropyLoss.

The input_data argument is the predicted output of the model, which could be the output of the final layer before a softmax activation function is applied. The labels argument is the true label for the corresponding input data.

Line 15: We compute the softmax probabilities manually, passing input_data and dim=1, which means that the softmax function is applied along the second dimension of the input_data tensor.

Line 18: We also print the computed softmax probabilities.

Line 21: We compute the cross-entropy loss manually by taking the log of the softmax probabilities at the target class indices, averaging over all samples, and negating the result.

Line 24: Finally, we print the manually computed loss.

The same pen-and-paper calculation would have been `from torch import nn`, `criterion = nn.CrossEntropyLoss()`, `input = torch.tensor(3.2, 1.3, 0.2 ...`

3.1 Experimental setup. Our experimental code was developed using PyTorch 1.12.1. The classification network is fine-tuned using cross-entropy loss.

To summarize, cross-entropy loss is a popular loss function in deep learning and is very effective for classification tasks. It is a strong and useful tool for training deep learning models, but it is only one of many possible loss functions and might not be the ideal option for every task or dataset. To find the best settings for a particular use case, it is therefore always a good idea to experiment with alternative loss functions and hyperparameters.
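The manual computation described in the walkthrough (softmax along dim=1, then the negated mean log-probability of the target classes) can be sketched as follows. This is a minimal illustration, not the original listing: the random sample values, the seed, and the variable names builtin_loss, softmax_probs, target_probs and manual_loss are assumptions chosen for the example.

```python
import torch
import torch.nn.functional as TF

# Assumed sample data: 4 samples, 10 classes; the values are arbitrary.
torch.manual_seed(0)
input_data = torch.randn(4, 10)        # raw, unnormalized logits
labels = torch.tensor([1, 0, 5, 9])    # int64 (LongTensor) class indices

# Built-in loss: takes logits directly and applies log-softmax internally.
builtin_loss = TF.cross_entropy(input_data, labels)

# Manual version, step 1: softmax along dim=1, the class dimension.
softmax_probs = TF.softmax(input_data, dim=1)
print(softmax_probs)

# Step 2: pick each sample's probability for its target class,
# take the log, average over the samples, and negate.
row_index = torch.arange(input_data.size(0))
target_probs = softmax_probs[row_index, labels]
manual_loss = -torch.log(target_probs).mean()

print(builtin_loss.item(), manual_loss.item())
```

The two printed loss values should agree up to floating-point error, which is a quick way to check that the manual formula matches what the built-in function computes with its default mean reduction.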
Our model is a custom CRNN-like model built from scratch in PyTorch, but it uses cross-entropy plus an auxiliary stop-prediction loss instead of CTC loss (10+. PyTorch has standard loss functions that we can use: for example, nn.BCEWithLogitsLoss() for a binary-classification problem and nn.CrossEntropyLoss() for a multi-class classification problem.
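As a rough sketch of how these two standard losses are called, the example below applies nn.BCEWithLogitsLoss() to a binary problem and nn.CrossEntropyLoss() to a three-class problem, then cross-checks each against its formula written out by hand. All tensor values and variable names are made up for illustration.

```python
import torch
from torch import nn

# Binary classification: one logit per sample, float targets of 0.0 or 1.0.
binary_logits = torch.tensor([0.8, -1.2, 2.5])
binary_targets = torch.tensor([1.0, 0.0, 1.0])
bce_loss = nn.BCEWithLogitsLoss()(binary_logits, binary_targets)

# Multi-class classification: one row of logits per sample,
# integer class indices as targets.
class_logits = torch.tensor([[2.0, 0.5, -1.0],
                             [0.1, 1.5, 0.3]])
class_targets = torch.tensor([0, 1])
ce_loss = nn.CrossEntropyLoss()(class_logits, class_targets)

# Cross-check: binary cross-entropy on sigmoid probabilities.
probs = torch.sigmoid(binary_logits)
manual_bce = -(binary_targets * torch.log(probs)
               + (1 - binary_targets) * torch.log(1 - probs)).mean()

# Cross-check: negated mean log-softmax value at the target class.
log_probs = torch.log_softmax(class_logits, dim=1)
manual_ce = -log_probs[torch.arange(2), class_targets].mean()

print(bce_loss.item(), manual_bce.item())
print(ce_loss.item(), manual_ce.item())
```

Both criteria take raw logits rather than probabilities, so no sigmoid or softmax layer should be applied to the model output before passing it to them.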

The purpose of cross-entropy is to take the output probabilities (P) and measure their distance from the true values.

Here's the Python code for the softmax function: `def softmax(x): return np.exp(x) / np.sum(np.exp(x), axis=0)`. We use numpy.exp(power) to raise e, the base of the natural logarithm, to any power we want.

Line 2: We also import torch.nn.functional under the alias TF.

Line 5: We define some sample input data and labels, with the input data having 4 samples and 10 classes.

Line 6: We create a tensor called labels using the PyTorch library. The tensor is of type LongTensor, which means that it contains 64-bit integer values.

Line 9: The TF.cross_entropy() function takes two arguments: input_data and labels.
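To make the softmax and cross-entropy steps concrete, here is a small NumPy sketch. It follows the softmax formula given in the text but subtracts the maximum score first, a common numerical-stability variation that leaves the result unchanged. The cross_entropy helper, the example scores, and the target index are hypothetical and exist only for illustration.

```python
import numpy as np

def softmax(x):
    """Softmax over a 1-D vector of scores."""
    # Shifting by the maximum does not change the result,
    # but it keeps np.exp from overflowing on large scores.
    shifted = x - np.max(x)
    exps = np.exp(shifted)
    return exps / np.sum(exps, axis=0)

def cross_entropy(probs, target_index):
    """Negative log of the probability assigned to the true class."""
    return -np.log(probs[target_index])

# Hypothetical scores for three classes; class 0 is taken as the true class.
scores = np.array([2.0, 1.0, 0.1])
probs = softmax(scores)
loss = cross_entropy(probs, target_index=0)

print(probs, probs.sum())
print(loss)
```

The loss shrinks toward zero as the probability of the true class approaches 1, and grows without bound as that probability approaches 0.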
